[This is an update and repost of an older post, which had a different format back then]

ABSTRACT

“As we approach the thirtieth anniversary of the Challenger Disaster (January 28, 2016), how do we continue to educate current and future leaders on how to make decisions that involve significant risk and uncertainty with the lessons of Challenger in mind?

To address this challenge we have created a case that places decision makers in a situation of risky choice. The case uses but masks the facts of the Challenger launch decision in the premise of a Coast Guard response to a wrecked cruise ship that is leaking oil. The protagonist in the case, Captain Bob Ross, a Coast Guard pilot, has to decide whether or not to send immediately the one Hercules aircraft he has available to move the oil-pollution equipment. Captain Ross faces pressure to send the flight and uncertainty regarding recent engine problems that may or may not be temperature related.

We collected decision responses from participants for multiple versions of the case to see how different pieces of additional information can influence decisions. We demonstrate that both additional information regarding the worst case consequences and additional information regarding the relationship between temperature and seal failure will make decision makers less likely to send the flight.

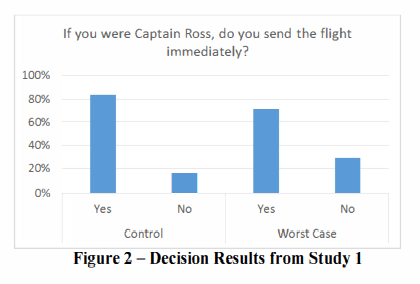

While these conditions are less likely than the base-case description, less likely is still high as around 70% (depending on condition) send the flight even with additional information. For the base-case description, 83.5% sent the flight. We conclude with discussion of the insights for organizational learning that can be gained from a case discussion of a decision similar to that faced by the Challenger managers but in a context different than Challenger.”

*****

My comments:

This study took the details of the NASA Challenger disaster and reframed them as an aviation case study.

In essence, Capt Bob Ross, a Coast Guard pilot, has to decide whether to immediately send the only available aircraft, carrying oil-pollution equipment, to a cruise ship that has run aground and is leaking oil.

The reader is then given info on the aircraft's engine refurbishment schedule; the last inspection showed some damage to shaft seals. Different stakeholder perspectives are given on the significance of the seal damage, e.g. the senior engine mechanic believes the damage is related to the cold, while another stakeholder thinks it's related to the pilots.

In the worst-case scenario (referred to in the findings below), significant engine issues during the mission would mean the aircraft has to be ditched in the ocean. Extreme water temperatures make this a dangerous proposition. Ambient temperatures and power settings at take-off are provided to the reader.

Some of the findings included:

· “Participants in the worst case condition were less likely to send the mission. They reported lower feelings regarding their willingness to take risks and were generally less optimistic. These measures explain participants less risky decision choices in the condition that emphasizes the worst case conditions” (p. 4)

· “In too many future situations, there will be difficult tradeoffs that involve highly risky gambles. As we have shown, when the details are masked as a different event, in this case, a Coast Guard aircraft, in the base case scenario, 83.5% of participants sent the flight immediately. With two interventions, emphasizing the worst case consequences and providing additional information, this percentage is reduced to around 70%.” (p. 5)

· That is, although emphasising the worst-case consequences had a statistically significant impact, the change was modest, from 83.5% to 71%; close to ¾ of people still sent the mission.

· When offered additional information (in this case, temperature data that is potentially important for estimating the risk of seal damage), nearly 60% chose to take it. However, even with the additional info, 69.7% of people still sent the flight immediately.

· Even though the additional info was offered at little cost (except the time to examine it), 40% still chose not to take it. This emphasises that in emergency situations decision makers need the best information in the clearest and easiest form, without having to search for it. Those who declined the info were presumed to have already made up their minds.

· On those who declined the info, the authors said: “These participants represent the unfortunate reality that when managers have made their decisions even in conditions of significant uncertainty, most will stop searching for additional information even if additional information could change the decision” (p. 5)

· “The next step is ensuring that managers are hearing the right kind of questions, are calibrating risk trades appropriately and listening to the right combination of views when making decisions. In this way, lessons from Challenger will not only improve very similar decisions but could improve managerial decision making across the board whether of a technical, safety or other high stakes nature” (pp. 7-8).

This paper, in conjunction with a number of other papers from the same author and colleagues, highlights that safety information and near misses may not have the expected impact on decision making. In some cases, near misses can increase the propensity for risky decisions, since a near miss can be reinterpreted as a near success (a barely successful outcome due to good management, systems, processes and/or luck) rather than as a near failure.

Authors: Dillon, R.L. (2015). New ways to learn from the Challenger Disaster: Almost 30 years later. Aerospace Conference, IEEE, 1-8. DOI: 10.1109/AERO.2015.7118898

Study link: http://dx.doi.org/10.1109/AERO.2015.7118898

Link to the LinkedIn article: https://www.linkedin.com/pulse/new-ways-learn-from-challenger-disaster-almost-30-years-hutchinson