A really interesting 2000 paper from James Reason exploring some apparent paradoxes in safety.

A paradox is “a statement contrary to received opinion; seemingly absurd though perhaps well-founded” (p3).

The pursuit of safety “abounds with paradox”, and in modern complex sociotechnical systems, things aren’t always what they seem. That is, some beliefs and traditions in safety may not actually be helping us to deliver safe and reliable work.

These paradoxes straddle the edge of safe performance – more mature organisations are better informed of where the edge may be without necessarily falling over it. It’s said that “The ‘edge’ lies between relative safety and unacceptable danger. In many industries, proximity to the ‘edge’ is the zone of greatest peril and also of greatest profit” (p3).

There’s too much gold in this paper to do it justice – hence I’ve skipped heaps of it. I’ve also skipped most of the framing around HRO and safety culture (mostly to avoid distractions like “[circle: there is / there isn’t] such a thing as safety culture and it [circle: may / may not] be relevant to this discussion”, or similar debates about HRO ideas).

Reason argues that most of these apparent paradoxes result from straddling the line between performance and safety and that, to date, most knowledge and research in safety has resulted from an extremely biased sample.

That is, safety is obsessed with the infrequent negative outcomes (incidents, injuries, near misses etc.) – what Reason calls the “negative face” of safety. The negative sides of safety, accidents etc., are “conspicuous and readily quantifiable occurrences [and it’s therefore unsurprising that] this darker face has occupied so much of our attention and shaped so many of our beliefs about safety” (p4).

In contrast, the paradoxes discussed by Reason result more from understanding the positive face of safety – the secretive and intrinsic resistance a system has towards hazards.

Like how healthcare knows more about “pathology than health”, safety science knows more about the links between bad events and human activity than about how human activity and organisational processes normally maintain expected performance.

This imbalance therefore creates these paradoxes.

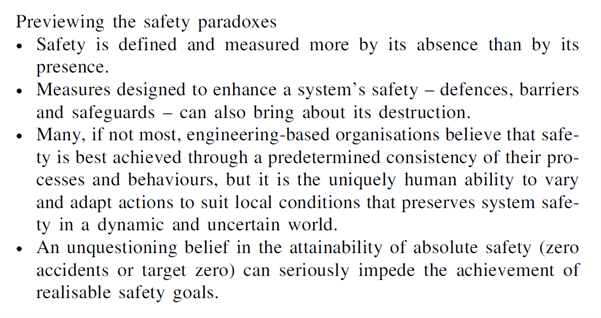

The paradoxes are highlighted below:

Paradox 1: How safety is defined and assessed

Key points here include:

- Dictionary definitions of safety say freedom from danger and risks, which Reason says tells us more about what “comprises ‘unsafety’ than about the substantive properties of safety itself” (p4)

- Problematically, safety is often measured by its occasional absences (TRIFR etc.) more than its usual presence [and what that presence looks like]

- Incident metrics are “flawed”. First, he argues that the relationship between the metrics and negative outcomes is tenuous at best. Also, chance plays a huge part in bad events, and more so in complex and well-defended technologies

- Second, “Even the most resistant organisations can suffer a bad accident” (p5) and in contrast “even the most vulnerable systems can evade disaster, at least for a time” (p5). He says chance doesn’t take sides – it strikes the deserving and preserves the unworthy

- Another issue is the pace of diminishing returns. When organisations focus heavily on safety activity, negative outcome data decline rapidly and then bottom out at an asymptotic value. In ultra-safe and mature organisations, once the plateau has been reached, “periodic variations in accident rates contain more noise than valid safety signals” and “negative outcome data are a poor indication of its ability to withstand adverse events in the future” (p5)

- By reducing accidents to very low levels, such organisations have “largely run out of ‘navigational aids’ by which to steer towards some safer state” (p5) by relying on these negative outcome measures

- Getting from bad to average is quite easy for an organisation but getting from average to excellent is extremely difficult – and the latter is why understanding this paradox is crucial

- Finally, while high accident rates may be a decent indicator of a “bad safety state” [** or good reporting], low asymptotic rates aren’t necessarily a signal of a good safety state.
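
To make the “more noise than valid safety signals” point concrete, here is a minimal sketch. It assumes yearly incident counts follow a Poisson distribution with a constant underlying rate; that is my simplifying assumption for illustration, not something Reason specifies, and the function names are hypothetical. Under that assumption, the relative size of random year-to-year variation grows as the expected count shrinks.

```python
import math


def poisson_pmf(k: int, mean: float) -> float:
    """Probability of exactly k incidents when the expected count is `mean`.

    Assumes a Poisson model (an illustrative assumption, not from the paper).
    """
    return math.exp(-mean) * mean ** k / math.factorial(k)


def relative_noise(mean: float) -> float:
    """Standard deviation divided by the mean of a Poisson count: 1 / sqrt(mean)."""
    return 1.0 / math.sqrt(mean)


def prob_at_least(k: int, mean: float) -> float:
    """Probability of seeing k or more incidents in a year."""
    return 1.0 - sum(poisson_pmf(i, mean) for i in range(k))


# Compare a high-incident organisation with one near its asymptote.
for expected in (100.0, 25.0, 2.0):
    print(
        f"expected incidents/year = {expected:5.1f}  "
        f"relative noise = {relative_noise(expected):.2f}  "
        f"P(zero incidents) = {poisson_pmf(0, expected):.3f}"
    )

# With an unchanged expected count of 2, both a "perfect" year and a
# "doubled" year are fairly likely to occur by chance alone.
print(f"P(0 incidents | mean 2)   = {poisson_pmf(0, 2.0):.3f}")
print(f"P(>=4 incidents | mean 2) = {prob_at_least(4, 2.0):.3f}")
```

In this toy model, an organisation expecting two incidents a year could record zero one year and four the next with no change in its underlying safety state, which is one way to read why low asymptotic rates offer so few “navigational aids”.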

Paradox 2: dangerous defences

Key arguments:

- Measures designed to enhance system safety “can also bring about its destruction” (p6)

- Some examples are given, like Chernobyl having its origins in the testing of an electrical safety device, and modern automation, which tried to eliminate opportunities for human error but actually created new types of operator mode confusion which “can be more dangerous than the slips and lapses it was intended to avoid” (p6)

- Another example relates to maintenance work. It’s intended to repair and forestall issues down the track but introduces significant risk due to maintenance errors (maintenance is often riddled with error traps, since plant design focused more on overall platform safety, reliability and the end-user than on the people constructing or maintaining it)

- A trade-off problem. Organisations are concerned with producing products or services – not to have “absolute” safety. Thus, there’s usually a tension between production and protection. Both make demands on resources and both are essential, but it’s production that pays the bills.

- Nicely, he argues that “information relating to the pursuit of productive goals is continuous, credible and compelling, while the information relating to protection is discontinuous, often unreliable, and only intermittently compelling (i.e., after a bad event)” (p7)

- A control problem. Organisations have a tension about how to think about and restrict the “enormous variability of human behaviour” (p7). Issues relate to excessive focus on procedures. These are feed-forward control devices, designed at one time and place and meant to project performance at a later time and place. Procedures and processes thus struggle with local variations (like all control systems)

- A common situation is when procedures “are unworkable, incomprehensible or simply wrong” (p8)

- Human performance can be seen as moral issues warranting sanctions. But most of the time, “punishing people does not eliminate the systemic causes” relating to behaviours

- Further, “by isolating individual actions from their local context, it can impede their discovery” (p8)

- The opacity problem. Defences can both protect against dangers but also create and conceal dangers.

- For instance, defences-in-depth are created by diversity and redundancy, but increasing the diversity and redundancy of defences “makes the system more opaque to those who manage and control it” (p9)

- The opacity problem takes different forms. For instance, operator and maintenance failures can remain hidden because they’re caught and concealed by multiple backups

- Concealment allows latent conditions to accumulate over time and thus increases the severity of failure when it strikes

- Increasing the complexity of interactions between layers of defences can increase the likelihood of common-mode failures and also result in a false sense of safety

Paradox 3: consistency vs variability

Commonly, managers regard human variability as unwanted. And like technical unreliability, the solution is seen to be greater consistency of human action.

However, “human variability in the form of moment-to-moment adaptations and adjustments to changing events is also what preserves system safety in an uncertain and dynamic world” (p10)

Therefore the paradox is that by “striving to constrain human variability, they are also undermining one of the system’s most important safeguards” (p10)

This is nicely encapsulated by Weick’s “dynamic non-event”. Safety is dynamic because it remains under control by human compensations and it’s a non-event because “safe outcomes claim little or no attention” (p10)

That is, accidents are salient and visible whereas non-events [normal work] generally aren’t. He notes that “Almost all of our methodological tools are geared to investigating adverse events. Very few of them are suited to creating an understanding of why timely adjustments are necessary to achieve successful outcomes in an uncertain and dynamic world” (p10)

Paradox 4: target zero

I’ve skipped a lot of this section, but this relates to the target zero model of “negative production”. It’s easy to sympathise with target zero since we don’t want people hurt

But Reason argues that this well-intentioned mantra “conveys a potentially dangerous misrepresentation of the nature of the struggle for safety” (p11). Safety isn’t something that ends in a decisive victory, but more an “endless guerrilla conflict” that offers “merely a workable survival that will allow them to achieve their productive goals for as long as possible” (p10)

Reason then discusses the implications of these paradoxes and relates them to HRO and culture. I’ve skipped all this.

Authors: Reason, J. (2000). Safety paradoxes and safety culture. Injury Control and Safety Promotion, 7(1), 3-14.

Study link: https://doi.org/10.1076/1566-0974(200003)7:1;1-V;FT003

Link to the LinkedIn article: https://www.linkedin.com/pulse/safety-paradoxes-culture-ben-hutchinson
