This paper discussed different types of drift and applied them to the case of railway accidents.

It was a fascinating paper, but a real challenge to summarise. I suggest you read the full paper if you’re interested in the topic as I can’t do this justice.

Note. This summary is very fragmented. This is because the paper is just so big, with so much info, I couldn’t cover most of it. Hence, I had to jump to disparate sections just to finish it.

Providing background:

· The paper was motivated by the author’s concern that “the ‘drift’ metaphor may trigger stereotyped responses to accidents such as recurring requirements for better change management”

· The author’s concern is that the drift metaphor “accounts too easily for a broad range of accidents and that it requires too little analysis to generate safety management measures addressing drift. As a consequence, the ‘drift’ metaphor could lead to stereotyped accident analyses and safety management actions, and fail to promote the search for alternative accident accounts and safety management strategies” (p110)

· Therefore, drift metaphors could drive generic approaches to safety management at the expense of practices adapted to specific systems, thereby leading to a “marginalisation of system-specific safety knowledge”

· Drift has different definitions in safety, and different connotations in everyday life. In safety, the drift metaphor suggests “navigating the safety space [to] determine the safety as a whole” (p110)

Literature on drift can simplistically be divided into four theoretical perspectives:

1) drift as gradual adaptation of behaviour based on local optimisation,

2) epistemological drift,

3) drift as an implication of complexity, and

4) drift and power

1) Drift as gradual adaptation represents the migration of organisational behaviour to the boundary of acceptable risk. This takes many forms but often involves actors striving for local optimisation, i.e. adaptations they perceive as optimal but which may be disconnected from overall system goals

From this perspective, improving safety involves elucidating the boundary. This can be accomplished by (p110): (1) increasing the sensitivity of actors to the boundary of loss-of-control, (2) providing indicators or pre-warnings of the approach to the boundary or (3) making the boundaries touchable and reversible, e.g. by teaching boundary characteristics and coping strategies

Nevertheless, over time system pressures will “‘retrain’ the actors to apply short cuts, since many shortcuts work well most of the time” (p110); moreover, habituation will attenuate the salience of warnings that the boundary is being breached

This view of drift aligns with Snook’s model of practical drift.

The author relates the perspective to Perrow’s tight and loose coupling. It’s said that rules needed to handle exceptional tightly coupled system states are vulnerable to adaptations during the long periods when the system is more loosely coupled. This is when people are likely to see rules as needlessly onerous.

An accident may then occur when the system suddenly becomes tightly coupled after behaviour has drifted during the loosely coupled period.

2) Epistemological drift. This perspective aligns with the work of Turner and Vaughan and concerns the understanding of a system within an organisation and how it may change or fail to change in ways contributing to an accident.

Based on Turner, information in organisations may stop flowing or become disjointed, and the company may focus on irrelevant issues which, in hindsight, diverted attention away from preventing the accident (what Turner called decoy phenomena).

Thus, this drift “takes the form of an unnoticed accumulation of events that are at odds with the culturally accepted beliefs about hazards” (p111).

Similarly, Vaughan’s work highlighted how repeated anomalies were institutionally rationalised over time. Structural secrecy allowed “this epistemological drift to carry on unchecked”. Structural secrecy refers to “the way that patterns of information, organisational structure, processes, and transactions, and the structure of regulatory relations systematically undermine the attempt to know and interpret situations in all organisations” (p111).

A study from Bourrier looked at adaptations and rules at one French and two American nuclear plants. Whereas the French plant was marked by departures from formal procedures, the US plants had a high degree of compliance. Workers at the French plant undertook local modifications or adjustments to some procedures and ignored others. They didn’t disclose the changes for fear of reprisal.

Hence, the consequence was behavioural drift combined with epistemological drift, since “the plant management had inaccurate knowledge of how the plant was maintained and about the technical state of the plant” (p111).

3) Drift as an implication of complexity. This perspective draws on the work of Hollnagel, Dekker and others. For one, Hollnagel’s Efficiency-Thoroughness Trade-Offs (ETTO) concept is highlighted, where individuals and groups must make constant trade-offs between quality and efficiency to meet goals.

These trade-offs can introduce variability, inviting technological glitches or failures, latent conditions, missing barriers and more.

It’s said that an important implication of this conceptualisation of drift is the emergent nature of accidents, rather than being resultant phenomena. That is, “they cannot be predicted by linear cause–effect models”.

From Dekker’s work, complex systems are characterised as open systems where each component is ignorant of the behaviour of the rest of the system. Systems have path dependence and are non-linear. The non-linearity means small events can turn into disasters. Dekker linked several complexity phenomena to drift.

These include: 1) resource scarcity and competition, leading to ETTO and local optimisations; 2) decrementalism, implying constant and gradual adaptations around goal conflicts producing “small, stepwise normalisation of deviations from the previously accepted norm” (p112); 3) sensitive dependence, where apparently insignificant local changes can propagate as they modulate through the system; and 4) unruly technology, which introduces and sustains uncertainties about how and when things can fail.

The author then discusses how HROs may counter drift differently than others. It’s said some HROs may tend to “reduce variability in inputs and process”, compared to Dekker’s proposals to “[meet] variability with exploration and variability” (e.g. requisite variety).

4) Drift and power. The role of power and politics is still a relatively under-explored area in safety science, despite a few excellent papers (e.g. Antonsen, Dekker and the like).

Vaughan’s account of the Challenger disaster can also be interpreted as an analysis of subtle power mechanisms, where production pressures shape practices.

The author previously proposed that power can be viewed in terms of the environmental conditions for safety work. These are conditions that influence the opportunities an organisation, group or individual has to control the risk of major accidents.

This is based on senders and receivers. Senders exert strong influence (power) over receivers. Managers, naturally, tend to have stronger ‘send’ influence, thereby shaping safety work downstream through the constraints they impose and the like.

For instance, at BP Texas City, BP senior managers created adverse environmental conditions for safety work via budget cuts etc. These changes “cascaded down the organisational levels and towards the sharp end of operations” (p113).

Drift and rail

Next the author discusses why different conceptualisations of drift could be important for understanding major accidents in rail. This is because:

1) Trains are tightly coupled physically to their infrastructure all the time. Hence, the entire rail system can be viewed as one big machine, and this is unlike other modes of transport (which are less physically coupled to their infrastructure).

2) Railway infrastructure is very expensive, and investments often depend on public funding. They also have long life cycles.

3) Critical safety functions have always been integrated in the infrastructure, and there is a long-term trend towards transferring additional safety functions from humans to the infrastructure.

The author argues that these facets of rail infrastructure relate to what he calls “systemic drift”. Systemic drift is “gradual uncompensated long-term changes of a human–machine–organisation system that lead to an increased accident risk” (p121).

Several Norwegian rail accidents and incidents are then discussed in order to elucidate the different mechanisms of drift. I’ve skipped these discussions, but a few notable points are that:

1) Because there are very few lines left in Eastern Norway with manual traffic control, train drivers and guards gradually lose familiarity with this operating mode.

Over time there was also an increasing chance that “‘strong but wrong’ action patterns compatible with Centralised Traffic Control [that] might intrude as slips and mistakes when train crew operated on lines with manual traffic control” (p116).

That is, since drivers are so used to operating under centralised traffic control, they may inadvertently take actions appropriate for centralised control rather than manual control (thereby risking collision).

2) The accidents also revealed that railway operations “often revert to more basic operating modes during infrastructure breakdown and planned maintenance and modifications of safety systems” (p117).

Reverting to these basic operating modes under abnormal conditions may lead to “operational complexity, loss of technical barriers, and increased dependence on human performance” (p117). The tasks of drivers and traffic controllers were said to be particularly demanding, and this occurred at a time when “no technical barriers were in place to help the system recover from erroneous actions” (p117).

3) Increased pressures on the whole rail network. Here, large community growth necessitated large increases in rail traffic. However, given major constraints governing rail infrastructure (space, noise, budget etc.), this often meant that depots, shunting yards and the like had to accommodate larger traffic flows without necessarily any increase in their size.

Thus, infrastructure always lagged behind growth in traffic volume, which resulted in personnel having to adapt to suit the context. Further, staff had to use infrastructure in ways that it wasn’t designed for.

Railway safety, drift, and infrastructure

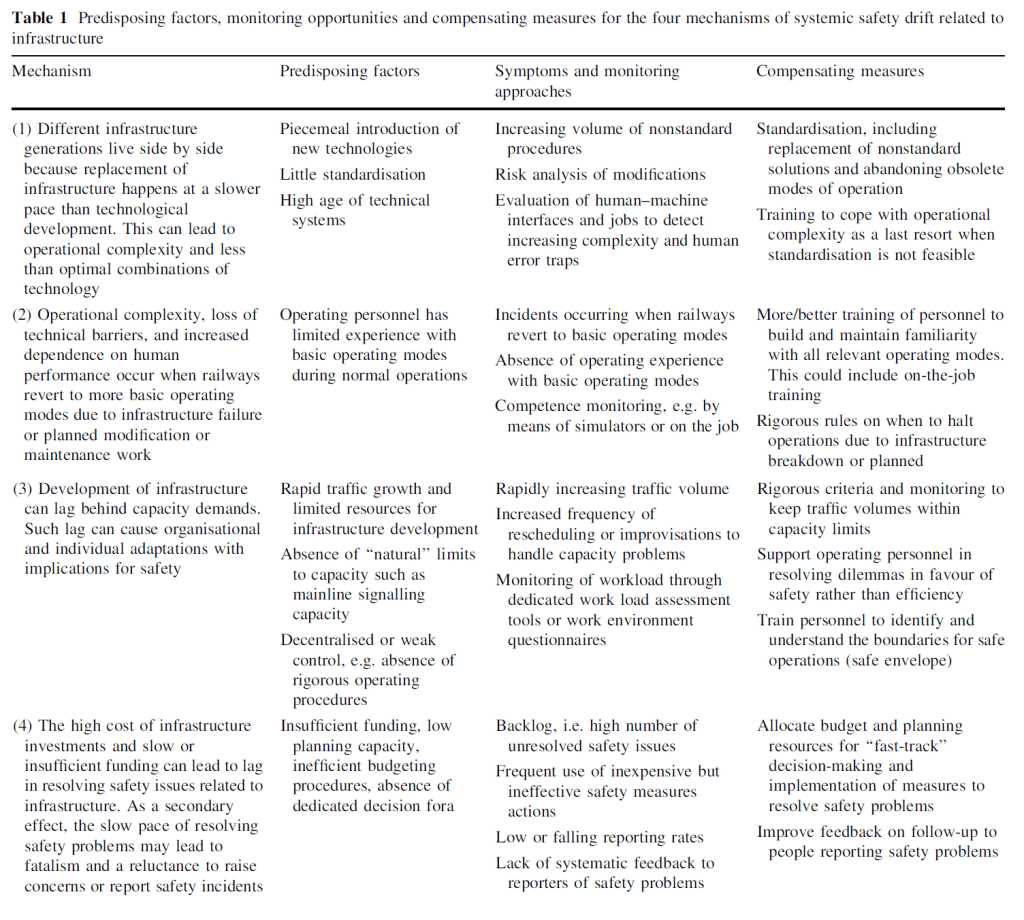

Next the author relates the accidents to systemic drift.

1) Replacement of infrastructure often occurs at a slower pace than technological development. This means that different infrastructure generations live side by side (e.g. manual control and centralised control).

This leads to higher operational complexity, like multiple sets of traffic safety rules. And it also leads to less than optimal configurations where centralised train control operates without automatic train protection in some circumstances.

2) Reversion to basic operating modes during abnormal functioning leads to operational complexity, loss of technical barriers and an increased dependence on human performance.

3) Development of infrastructure can lag behind capacity demands. This lag can result in organisational and individual adaptations to work and technology that can increase risk and invalidate design assumptions. This slow pace of resolving infrastructure issues may lead to a sense of fatalism and a reluctance to raise safety issues, since the issues may not be addressed anyway.

Drift as gradual adaptation of behaviour based on local optimisation was seen in accidents at shunting yards. Epistemological drift may have occurred as a consequence of normalisation of behavioural adaptations. This may also occur if operations personnel fail to communicate safety problems because they don’t expect anything to be fixed.

Drift as an implication of complexity may have some limited relevance based on the analysed accidents. However, resource scarcity and competition were relevant in all accidents. Moreover, complexity was relevant in several cases as a result of local work environments (like ergonomic problems or complex rule systems), rather than resulting from nonlinear system dynamics as proposed by Dekker.

It’s said that rail traffic hardly exhibits nonlinear dynamics.

Drift resulting from power and politics is extremely relevant. This results in legacy issues not being resolved, and technology/infrastructure of different generations living side-by-side. For instance, this results in higher operational complexity, more confusing rule sets, and a lack of standardisation.

Relating to the basic operating mode during abnormal situations (like higher-than-expected rail traffic), this results in loss of technical barriers, increased dependence on people, and increased operational complexity.

A result here is the system operating with rule sets not designed for these situations (but rather designed for normal operating modes). These situations increase risk, particularly if personnel don’t practise operating under these basic modes in their daily work. For instance, if drivers and other personnel almost exclusively train and work under centralised control, the odd times they need to operate under manual control will be far more hazardous [** this harks back to Bainbridge’s timeless “ironies of automation” arguments.]

The author discusses ways to help counter different types of drift. I’ve skipped most of this. But, for one, he warns that a focus on training and behaviour isn’t the solution (though it can be part of the solution).

Two, the solution isn’t just more rules, which were themselves implicated in some accidents. Drawing on the model 1 / model 2 distinction of rules, it’s said that a narrow focus on model 1, where rules are seen primarily as a means of compliance, could “fail to produce long-term improvements because they address only a minor part of the mechanism that creates and maintains drift” (p125).

Moreover, the use of sanctions for non-compliance may “even cause operating personnel to keep their behavioural adaptations hidden from management for fear of reprisals and thus make it more difficult for management to ‘navigate the safety space’” (p125).

In concluding, the author remarks:

The drift metaphor could lead to simplistic and stereotyped calls for generic safety management remedies.

In contrast, attention to diversity, like with different conceptualisations of drift, may be a starting point for tailoring safety management strategies to the specific system challenges.

The key mechanisms of drift in rail, based on this sample, are highlighted below:

Author: Rosness, R. (2017). The diversity of systemic safety drift: the role of infrastructure in the railway sector. Cognition, Technology & Work, 19(1), 109-126.

Study link: https://link.springer.com/article/10.1007/s10111-016-0398-7

LinkedIn post: https://www.linkedin.com/pulse/diversity-systemic-safety-drift-role-infrastructure-ben-hutchinson
