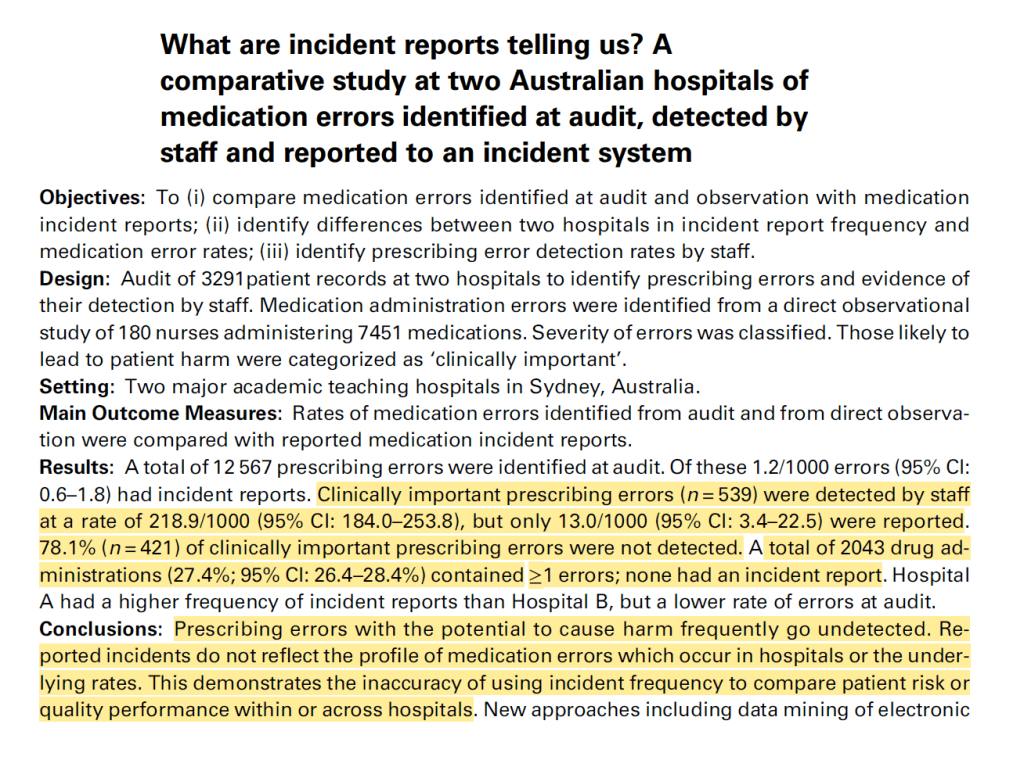

How accurate and comprehensive are incident reporting systems compared to the actual frequency and severity of events that occur? According to this study, not very.

This interesting study compared the medication errors made by medical personnel, identified through observation and audit, with the frequency and types of medication errors and events reported in the official incident reporting system.

It’s healthcare, but the findings match research from other industries.

Providing background on incident reporting systems, the authors argue that “under-reporting is a serious limitation …[6–9]. Poor understanding of the strengths and limitations of incident data can lead decision-makers astray”.

Further, “incident report data [is] difficult to interpret, and raised concerns that resources may be misdirected to solve problems that are easily identifiable and more likely to be reported to incident systems”.

These problems are compounded by “small sample sizes for specific incident types, a lack of denominators and advice to monitor ‘trends’ in incident report frequency”. Problematically, management tends to “interpret increases negatively [16]. Graphing of incident data may further reinforce perceptions of the significance of small changes in the frequency of incident reports”.

In this study, they found that:

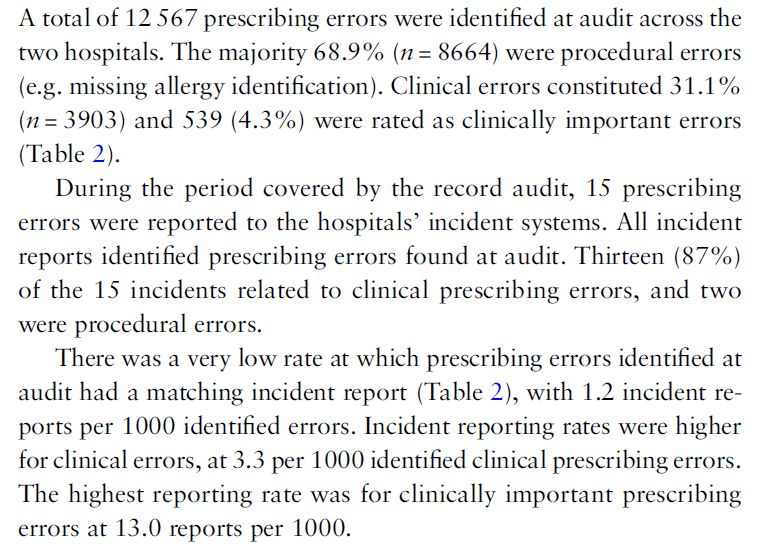

· >12k prescribing errors were identified during ‘audit’ (part of the observation of workers)

· Of these, just 1.2/1000 had an associated incident report

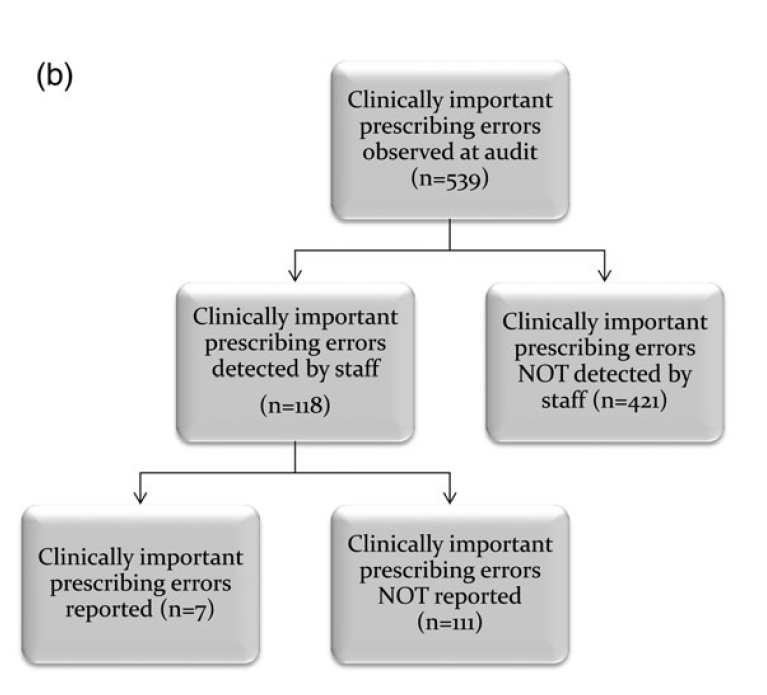

· Clinically important prescribing errors (ones that could be serious) were detected by staff at a rate of 218/1000, but only 13/1000 were reported

· 78% of clinically important prescribing errors were not detected by staff during the task

· Prescribing errors with the potential to result in harm frequently go undetected

· Individual health care incident reporting data “provide an inaccurate reflection of the types or severity of medication errors occurring to patients”

· Hence, “incident data may provide only limited insights into patient risk”

· And “This demonstrates the inaccuracy of using incident frequency to compare patient risk or quality performance within or across hospitals”

· Significant barriers exist to reporting
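
To put the per-1000 figures above on a common footing, here is a minimal Python sketch that converts them to percentages. It assumes all three rates use the same kind of denominator (errors identified at audit), which is my reading of the summary rather than something checked against the paper's tables. The variable names are purely illustrative.

```python
# Rates per 1000 errors, taken from the findings listed above.
# Assumption: each rate is expressed per 1000 errors identified at audit.
ALL_ERRORS_REPORTED_PER_1000 = 1.2   # all prescribing errors with an incident report
IMPORTANT_DETECTED_PER_1000 = 218    # clinically important errors detected by staff
IMPORTANT_REPORTED_PER_1000 = 13     # clinically important errors with an incident report


def per_1000_to_pct(rate_per_1000: float) -> float:
    """Convert a rate per 1000 into a percentage."""
    return rate_per_1000 / 1000 * 100


# Share of clinically important errors that staff did not detect.
undetected_pct = 100 - per_1000_to_pct(IMPORTANT_DETECTED_PER_1000)

# Share of clinically important errors that never reached the incident system.
unreported_pct = 100 - per_1000_to_pct(IMPORTANT_REPORTED_PER_1000)

# Of the clinically important errors staff did detect, the share that was reported.
reported_given_detected_pct = (
    IMPORTANT_REPORTED_PER_1000 / IMPORTANT_DETECTED_PER_1000 * 100
)

print(f"Undetected: {undetected_pct:.1f}%")
print(f"Unreported: {unreported_pct:.1f}%")
print(f"Reported, given detected: {reported_given_detected_pct:.1f}%")
```

Under that assumption, the 218/1000 detection rate lines up with the 78% undetected figure, and only a small fraction of the errors staff did notice ended up in the incident system.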

I think you could swap the words hospital, prescribing etc. for a matching construction term (project, work at height, confined space) and still be left with the same overall conclusions: incident reporting data doesn’t provide an accurate reflection of site risk, hazardousness or drift, and barriers to reporting exist.

Also linked below are other posts about incident under-reporting and skewed/inaccurate reflections of incident types and severities.

Ref: Westbrook, J. I., Li, L., Lehnbom, E. C., Baysari, M. T., Braithwaite, J., Burke, R., & Day, R. O. (2015). What are incident reports telling us? A comparative study at two Australian hospitals of medication errors identified at audit. International Journal for Quality in Health Care, 27(1), 1-9.

Study link: https://academic.oup.com/intqhc/article/27/1/1/1830060

Other studies:

- https://www.linkedin.com/posts/benhutchinson2_lies-damned-lies-and-incident-statistics-activity-6945863850126635008-kLgz?utm_source=share&utm_medium=member_desktop

- https://www.linkedin.com/pulse/how-does-selective-reporting-distort-understanding-ben-hutchinson

- https://www.linkedin.com/posts/benhutchinson2_data-from-tahira-probst-et-al-on-the-links-activity-7024875041288818688-m4Kv?utm_source=share&utm_medium=member_desktop

- https://www.linkedin.com/pulse/organizational-injury-rate-underreporting-moderating-ben-hutchinson

- https://www.linkedin.com/pulse/pressure-produce-reduce-accident-reporting-ben-hutchinson
