A fantastic read from Gemma Read, Steven Shorrock, Guy Walker and Paul Salmon discussing the history of core theories and methods over the last 60 years around ‘human error’.

The paper also discusses the human error construct within ergonomics & human factors (EHF) and some “key conceptual difficulties” that the construct faces in a systems era of EHF, before the authors propose a way forward.

I won’t properly summarise this study (at least not yet): it’s 34 pages of (great) content and I just can’t do it justice. There are too many insightful comments for me to cover.

However, one point from the paper is that human error is an “intuitively meaningful” construct that can easily be explained to people outside of EHF. The authors also note that framing actions as errors, such as the unintentional types or omissions, may help to reduce unjust blame.

Further, it’s argued that human error models and taxonomies have been important tools in EHF for decades, have helped to improve human-machine interface design and the design of error-tolerant systems, and have probably helped us to get where we are today.

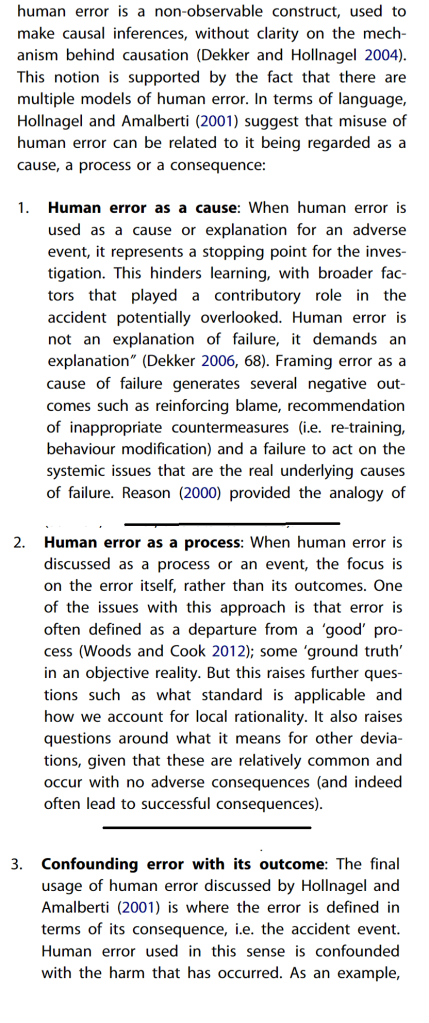

Nevertheless, human error also brings with it some misuses and abuses. Those discussed in the paper include:

- A lack of precision in theory and use in language, which can include human error being used to describe a cause or explanation for adverse events. This is noted to present a stopping point in investigations which hinders learning. It can also lead to a focus on the frontline operators rather than on upstream system factors.

- Human error referred to as a process or event, which places focus on the error itself rather than its outcomes. That is, error is seen as a departure from some universal objective truth about what is right. This raises issues about what standard is applicable, how we account for local rationality, and also raises questions about these apparent deviations from “good” that are relatively common and occur with no adverse consequences.

- Confounding error with its outcome, where error is defined in terms of the consequence such as the accident itself. That is, “Human error used in this sense is confounded with the harm that has occurred”. An example is healthcare, where medical harm is conflated with medical error.

- The concept may lead to blame and simplistic explanations of accidents, which, in part, are grounded in concepts of rational actors acting freely and/or acting in linear and deterministic environments, ignoring factors of local rationality and systems factors like emergence, non-linearity, feedback loops and performance variability.

- It may place undue focus on frontline workers. Here they say “Human error models and methods, almost universally, fail to consider that errors are made by humans at all levels of a system (Dallat, Salmon & Goode, 2019). Rather, they bring the analysts focus to the behaviour of operators and users, particularly those on the ‘front-line’ or ‘sharp-end’ such as pilots, control room operators, and drivers” (p25).

- It may result in inappropriate fixes or countermeasures, for instance focused on “fixing” frontline workers such as re-training or education. Further they argue that “the pervasiveness of the hierarchy of control approach in safety engineering and occupational health and safety (OHS) can reinforce the philosophy that humans are a ‘weak point’ in systems and need to be controlled through engineering and administrative mechanisms” (p26).

It’s said that the “misuse and abuse of human error leads to disadvantages which may be slowing progress in safety improvement” (p27).

Also of interest is where the authors discuss how “errors and heroic recoveries emerge from the same underlying processes”. That is, errors also facilitate serendipitous innovation, and trying to flat-out eliminate error via strict controls or automation may “also act to eliminate human innovation, adaptability, creativity and resilience” (p22).

In discussing the way forward, they note that “A recognition that humans only operate as part of wider complex systems leads to the inevitable conclusion that we must move beyond a focus on individual error to systems failure to understand and optimise whole systems” (p33).

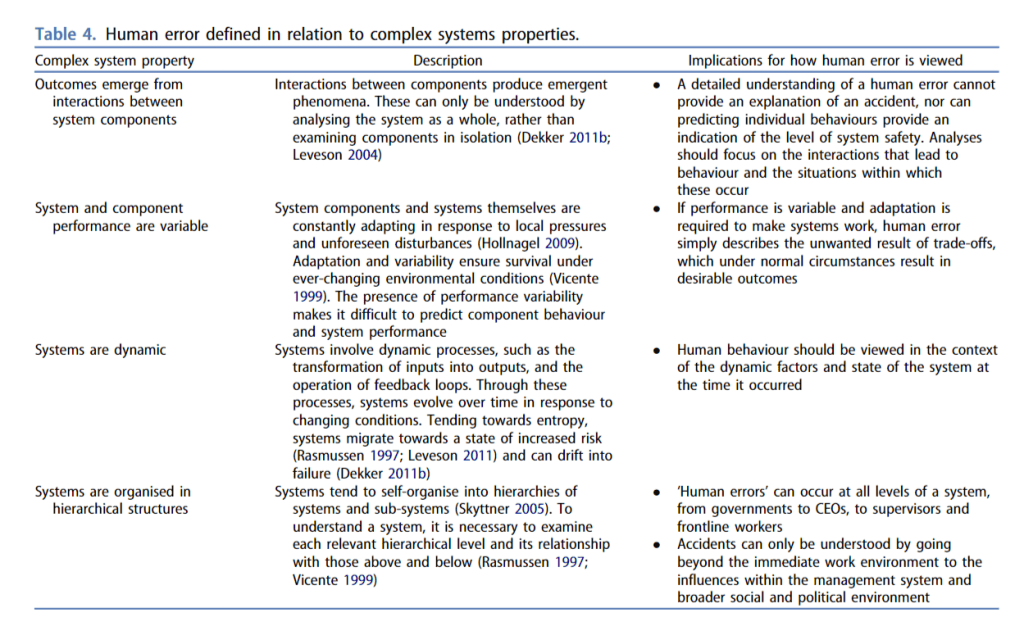

It’s said that a range of systems ergonomics theories and methods exist to support this shift. Here they provide a table of how the human error construct is considered in terms of systems thinking factors, mostly highlighting its inadequacies for complex systems.

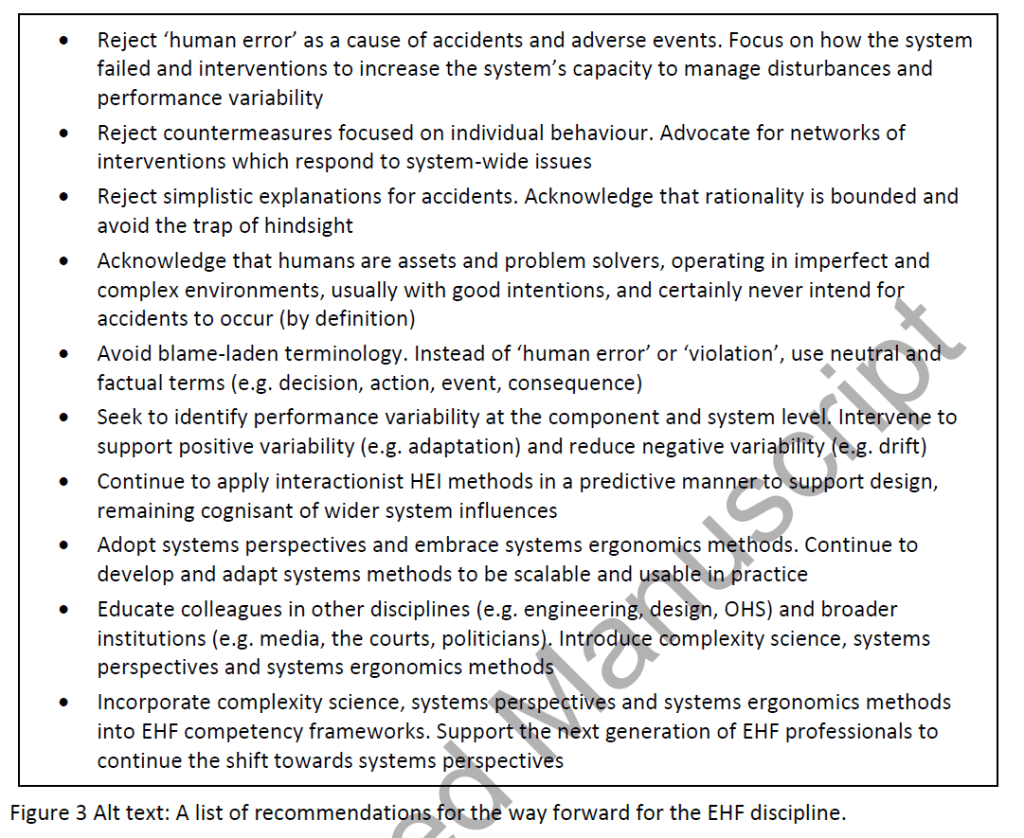

I’ve barely scratched the surface of this paper and I highly recommend you check it out. Usefully, it provides the ways forward in a figure, provided below.

Authors: G. J. M. Read, S. Shorrock, G. H. Walker & P. M. Salmon, 2021, Ergonomics

Study link: https://researchportal.hw.ac.uk/files/50813125/Author_accepted_version_Final.pdf

Link to the LinkedIn article: https://www.linkedin.com/pulse/state-science-evolving-perspectives-human-error-ben-hutchinson
