This brief paper from Hollnagel & Macleod may interest you.

Some key points:

- Determining the cause of an accident is a psychological (social) rather than logical (rational) process and can never be completely free of bias

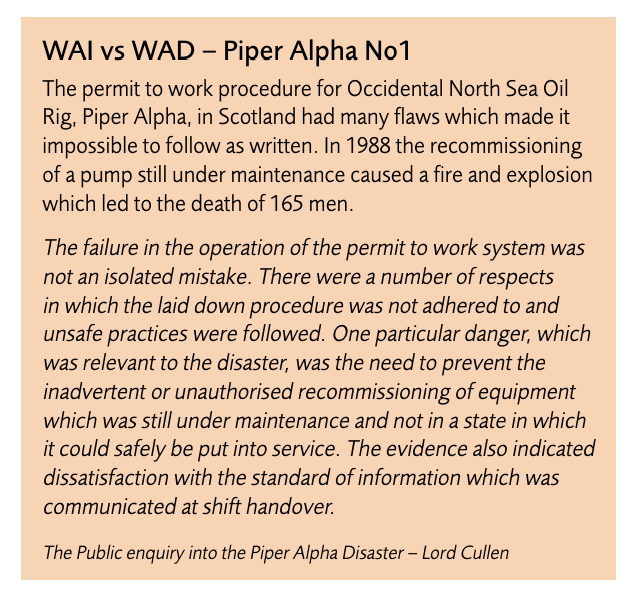

- WAI-WAD (work-as-imagined vs work-as-done): The design, management and analysis of work “tacitly assumes that we know how things are done or should be done”

- In reality, “only in very special cases is real work completely regular or orderly and perfectly described by rules”, and WAD will “always be different from work-as-imagined (WAI) because it is impossible to know in advance what the actual conditions of work will be”

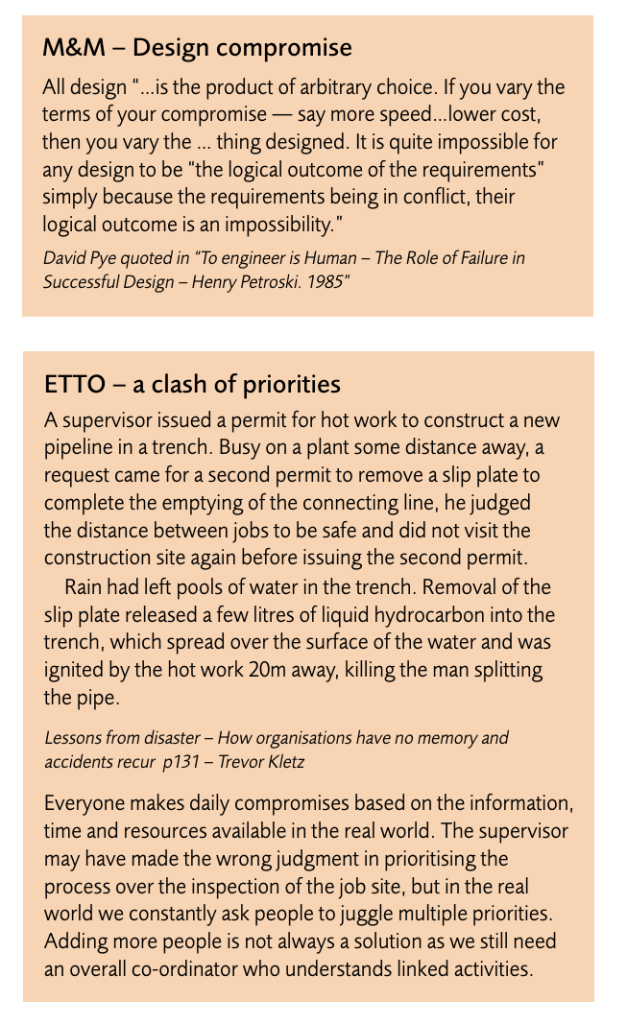

- ETTO (Efficiency-Thoroughness Trade-Off): People have to balance efficiency against thoroughness with limited time and resources, continuously making compromises based on the information, time and resources available

- For individuals, decisions about efficiency are not necessarily conscious, but “rather a result of habit, social norms, experience and established practice”

- In contrast, at the organisational level the choice of efficiency “is more likely to be the result of a direct consideration — although this choice in itself will also be subject to the ETTO principle”

- M&M (Methods & Models): Accident analysis is usually based on pre-defined models, and these models directly or indirectly provide the rationale for the methods used, but the rationales are rarely made visible or explicitly recognised

- The models often follow a linear logic based on simple causality, where one thing causes another. The challenge is that “modern process plants are complex, interlocking, intractable systems, designed and run by people — socio-technical rather than pure technical systems. Linear models are no longer adequate, nor is causal reasoning sufficient”

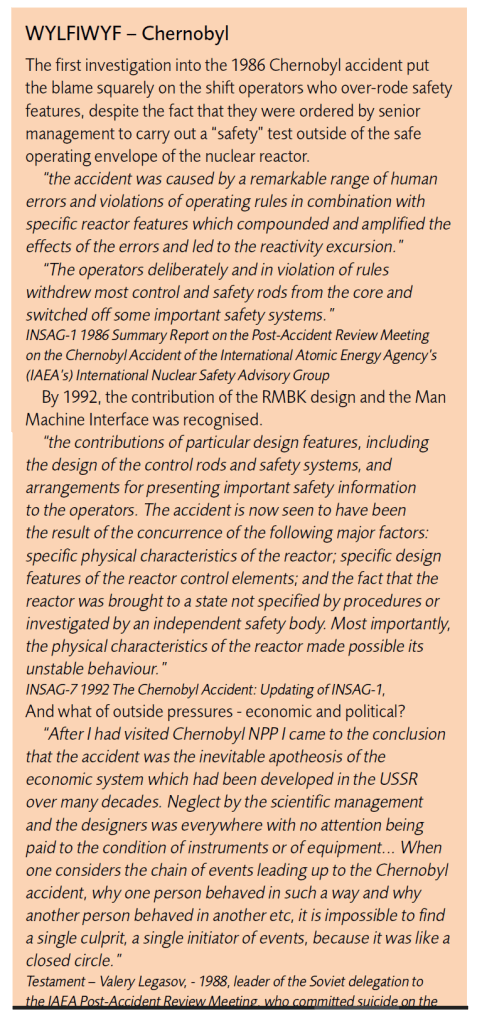

- WYLFIWYF (What-You-Look-For-Is-What-You-Find): Assumptions about causes largely determine what lessons are learned. These assumptions are sometimes explicit but often not. Assumptions about causality have also changed over time; for instance, the earliest was the age of technology, dominated by accidents attributed to technological failure

- Next came an age of human factors, where things go wrong because of people and other human-centred factors; a further broad age is that of safety management, where things go wrong because organisations, including their leadership, fail

- What we look for, and therefore what we find, is shaped by the causality credo. If accidents are thought of in cause-effect terms, then: 1) the accident happens because something failed, 2) the causes of failure can be found, 3) the causes can be eliminated or neutralised, and 4) since causes can be found, all accidents can be prevented

- In contrast, they propose a non-linear alternative: 1) accidents result from unexpected combinations of normal variability in everyday performance, 2) accidents are prevented by monitoring everyday performance and damping variability, and 3) safety requires constant vigilance, a sense of unease and the “imagination to anticipate future events”

In sum, they answer three questions:

- Can investigations be free from bias? No. “So long as one group of people investigate the actions of another group of people, there will always be bias, conscious or unconscious”

- Are accident investigations worthwhile? Yes, as long as the primary focus is on accident prevention (ensuring things go well) and we recognise the limitations of our approaches. Investigations also serve as a social response to psychological needs and can help the people affected

- Can we learn from accident investigations? Yes, but this depends heavily on the assumptions and logics at play. Learning is possible if investigations highlight the human cost and the things we face every day, convey a sense of chronic unease, or “chronicle the stories that give us pause”

Ref: Hollnagel, E., & Macleod, F. (2019). The imperfections of accident analysis. Loss Prevention Bulletin, 270(3), 2-6.

Study link: https://www.icheme.org/media/12669/lpb270_pg02.pdf

My site with more reviews: https://safety177496371.wordpress.com
