This Master’s thesis by Philip Thomas on risk matrices (RMs) has some interesting sections on the flaws of risk matrices, and offers some ‘partial fixes’ and alternatives for consideration.

I covered his conference paper with similar findings a while back if you’re interested (link below).

Phil argues that “despite the popularity of RMs, neither its accuracy nor its reliability has been rigorously assessed or reported in the published literature”.

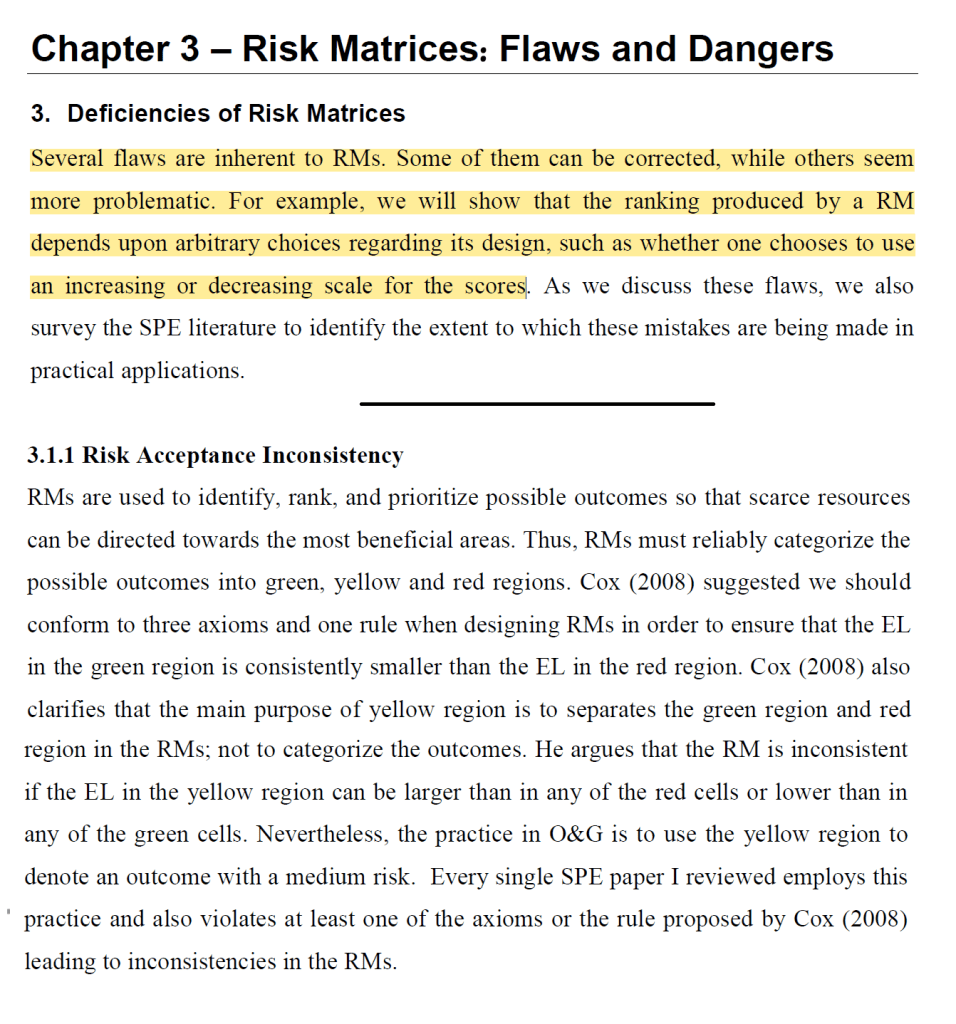

He covers a range of known flaws of risk matrices, along with some new flaws he identified himself (see the images):

- Flaws include risk acceptance inconsistency, range compression, centering bias, category definition bias, arbitrary risk ranking, distortion of relative distance

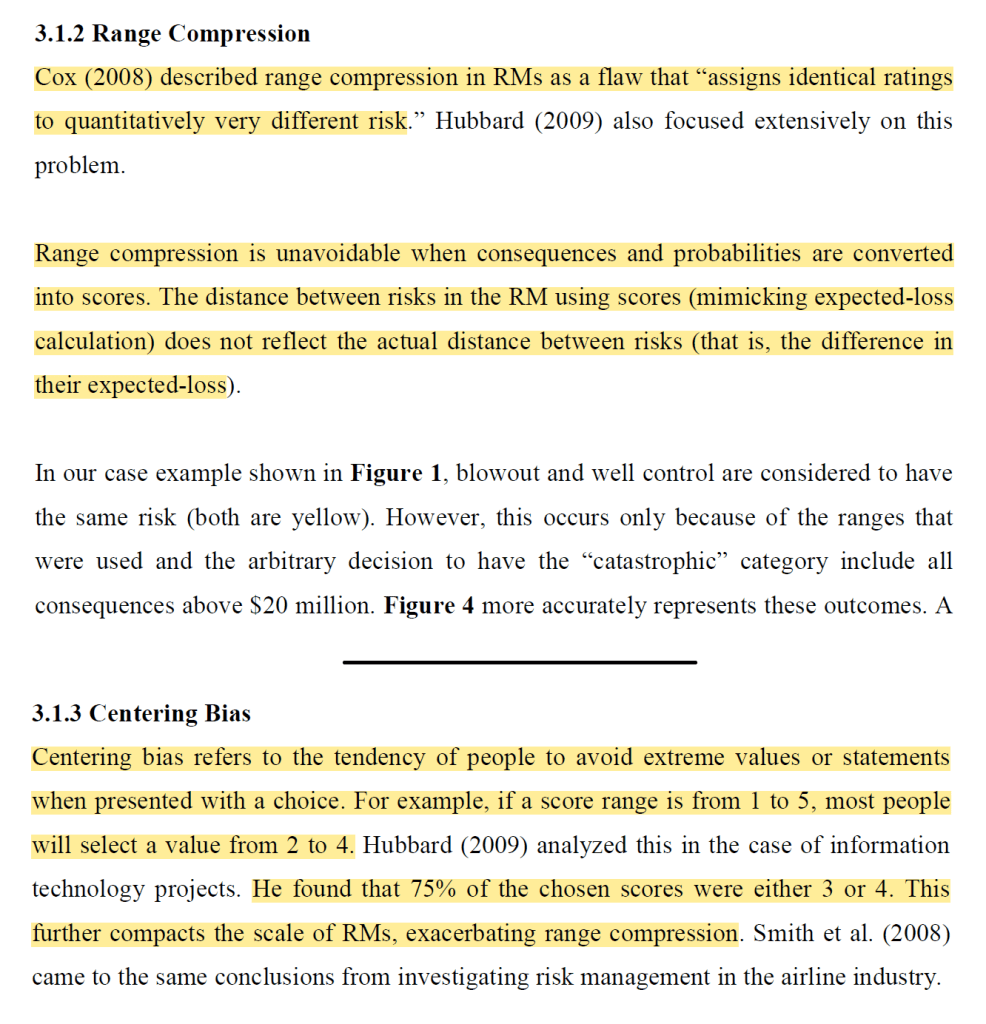

- He provides an example comparing a well blowout to a loss of well control. In that matrix, a blowout appears little different from a loss of well control, as they are “both ‘yellow’ risks after all”

- Hence, “Using the scoring mechanism embedded in RMs compress the range of outcomes and, thus, miscommunicates the relative magnitude of both consequences and probabilities”

- Regarding the centering bias (where people tend to select scores in the mid-range and thereby avoid extremes), he found that 70-90% of risk scores in the sampled oil & gas research exhibited a centering effect

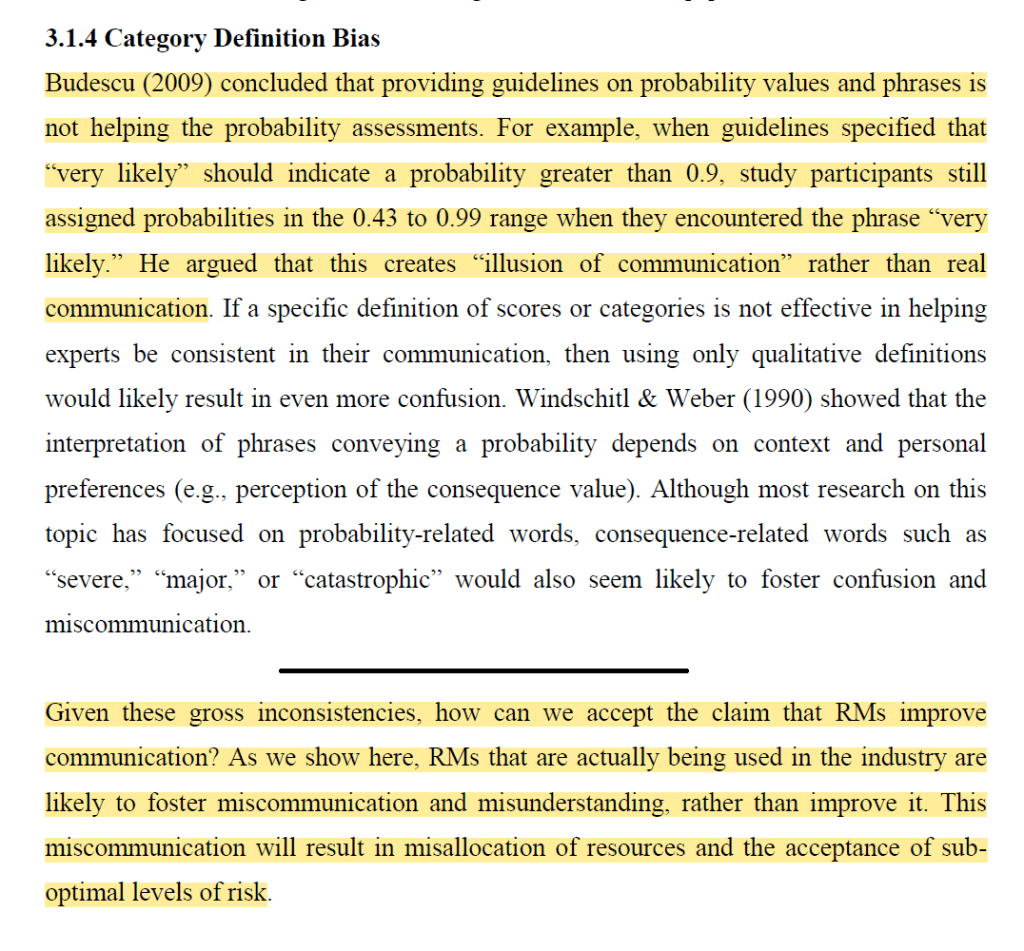
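The range compression point above (a blowout and a loss of well control both landing on ‘yellow’) can be illustrated with a minimal sketch. Everything here is an assumption for illustration only: the category boundaries, the probabilities, and the costs are hypothetical and are not taken from the thesis.

```python
import bisect

# Hypothetical upper bounds for categories 1..5 (not from the thesis).
PROB_BOUNDS = [0.01, 0.05, 0.25, 0.50]   # annual probability
COST_BOUNDS = [1e5, 1e6, 2e7, 5e8]       # consequence in USD


def score(value, bounds):
    """Return the 1-based category for value, given ascending upper bounds."""
    return bisect.bisect_left(bounds, value) + 1


# Hypothetical events: the numbers are made up to show the effect.
events = {
    "loss of well control": {"p": 0.04, "cost": 2.5e7},
    "blowout": {"p": 0.02, "cost": 4e8},
}

cells = {}
expected_loss = {}
for name, e in events.items():
    # The matrix only sees the two category scores.
    cells[name] = (score(e["p"], PROB_BOUNDS), score(e["cost"], COST_BOUNDS))
    # The underlying quantity the cell is meant to summarise.
    expected_loss[name] = e["p"] * e["cost"]

for name in events:
    print(name, "cell:", cells[name], "expected loss:", expected_loss[name])
```

Under these assumed numbers both events fall in the same cell, so the matrix treats them as equal, even though their expected losses differ severalfold.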

- Phil asks: “Given these gross inconsistencies, how can we accept the claim that RMs improve communication?”

- Instead, as his data suggests, RMs “that are actually being used in the industry are likely to foster miscommunication and misunderstanding, rather than improve it. This miscommunication will result in misallocation of resources and the acceptance of sub-optimal levels of risk”

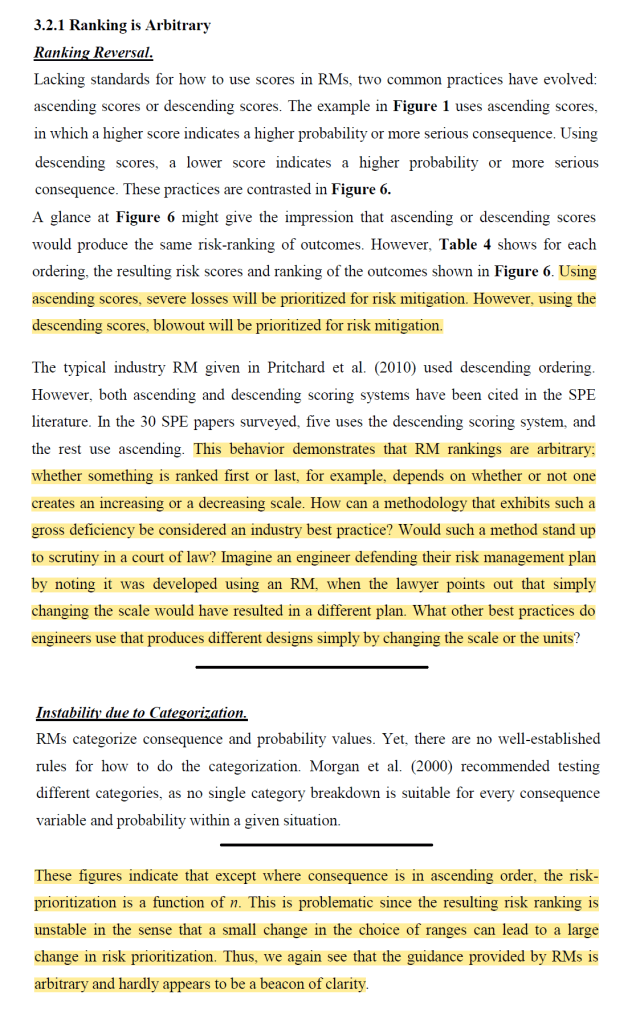

- He next describes how some RM risk rankings “are arbitrary; whether something is ranked first or last, for example, depends on whether or not one creates an increasing or a decreasing scale. How can a methodology that exhibits such a gross deficiency be considered an industry best practice?”

- He then ponders how RMs would stand up to legal scrutiny: “Imagine an engineer defending their risk management plan by noting it was developed using an RM, when the lawyer points out that simply changing the scale would have resulted in a different plan. What other best practices do engineers use that produces different designs simply by changing the scale or the units?”

- RMs can be problematic since the resultant risk ranking is “unstable in the sense that a small change in the choice of ranges can lead to a large change in risk prioritization. Thus, we again see that the guidance provided by RMs is arbitrary and hardly appears to be a beacon of clarity”

- In sum, RMs can have “inherent dangers” like the above structural flaws. Importantly, some of these flaws “cannot be corrected and are inherent to the design and use of RMs. The ranking produced by RMs was shown to be unduly influenced by their design, which is ultimately arbitrary”

- Interestingly, he argues that “No guidance [as of 2013] exists regarding these design parameters because there is very little to say. A tool that produces arbitrary recommendations in an area as important as risk management in O&G should not be considered an industry best practice”

- Finally, he argues that using RMs may be “better than nothing”, but that is debatable. RMs may help generate discussion of the risks, which is helpful

- But problematically, “RMs obscure rather than enlighten communication”.
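The point about rankings depending on an increasing or decreasing scale can be sketched in a few lines. This is an illustrative sketch, not the author’s own example: the three events and their scores are hypothetical, and it assumes the common practice of multiplying the probability score by the consequence score.

```python
N = 5  # categories per axis in a hypothetical 5x5 matrix

# Hypothetical events as (probability score, consequence score) on an
# ascending scale, where 5 is the most likely / most severe.
events = {"A": (1, 5), "B": (2, 3), "C": (3, 3)}


def rank_ascending(events):
    """Most risky first: on an ascending scale a higher product is worse."""
    return sorted(events, key=lambda k: events[k][0] * events[k][1], reverse=True)


def rank_descending(events, n=N):
    """Rescore the same events on a descending scale (1 = most likely / severe).

    On this scale a lower product is worse, so sort by the smallest product first.
    """
    flipped = {k: (n + 1 - p, n + 1 - c) for k, (p, c) in events.items()}
    return sorted(flipped, key=lambda k: flipped[k][0] * flipped[k][1])


ascending_order = rank_ascending(events)
descending_order = rank_descending(events)

print("ascending scale, most risky first: ", ascending_order)
print("descending scale, most risky first:", descending_order)
```

With these assumed scores, event A is ranked last on the ascending scale and first on the descending scale, although nothing about the events themselves has changed.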

He also suggests some partial fixes and alternative approaches.

Ref: Thomas, P. (2013). The Risk of Using Risk Matrices. Master’s Thesis.

Conference paper: https://www.linkedin.com/pulse/risk-using-matrices-ben-hutchinson
