In this discussion paper, Hopkins explores organisational cultures and how they affect safety. You’ll note he writes cultures, plural, rather than culture as a monolithic construct.

There’s way too much in this paper to cover here, so just a few points. Check out the full paper if the topic interests you.

Hopkins opens with: “Despite all that has been written about safety culture, there is no agreement about just what this concept means”. Hence, there is a lot of ambiguity in the study of cultures.

For some people, every organisation has its own “safety culture of some sort, which can be described as strong or weak”, or positive/negative. For others, only those select organisations with an “over-riding commitment to safety can be said to have a safety culture”.

On the latter definition, James Reason remarked that “like a state of grace, a safety culture is something that is striven for but rarely attained”.

What is culture?

For the purposes of his argument, Hopkins suggests that every organisation has a culture, or more specifically a series of subcultures, and that these cultures can affect safety in various ways (** others use cultural typologies like micro, meso and macro, and more).

Hopkins says that perhaps the best-known definition of organisational culture is “the way we do things around here”, which he notes is clearly behaviour-focused.

For Hofstede, “shared perceptions of daily practices should be considered to be the core of an organisation’s culture”, rather than values. The two views aren’t necessarily in conflict, though; it’s a matter of emphasis.

For Hopkins, “The way we do things around here” implicitly carries a normative judgement of what is right, appropriate or the accepted way to do something; this is influenced by shared assumptions or values.

Culture and climate

Next he discusses the relationship between culture and climate. Some argue that climate is a subset, or a surface and measurable facet, of culture, e.g. “climate is a manifestation of culture”; whereas climate can usually be measured, culture may not be.

How different approaches tackle measurement

Perception surveys are by far the most frequently used strategy in cultural research (** a strategy many are critical of as a way of capturing cultural elements). Surveys are suitable for capturing individual attitudes. He thinks they’re also suitable for studying practices, with the qualifier that surveys capture “people’s perceptions rather than what actually happens”.

Climate studies often, but not always, capture quantitative or semi-quantitative data, whereas cultural studies may be far more qualitative. Surveys have limits, of course: they are relatively superficial, and many “practices are too complex to be meaningfully described in the words of a survey question”. Surveys also struggle with dynamic processes, such as how problems actually get solved.

Another strategy, the ethnographic method, is for researchers to embed themselves within those environments and take detailed observations. Ethnographers try to minimise their impact but can never be completely divorced from the setting.

Quoting Schein, Hopkins observes that “one can understand a system best by trying to change it” and “Some of the most important things I learned about (company) cultures surfaced only as reactions to some of my interventionist efforts”. Ethnographic methods provide richer accounts than other means, but typically take a lot more time and may involve smaller or narrower samples.

Another strategy is drawing inferences based on major accident inquiries and associated information.

Major accident inquiries

First, it’s “of course not possible to provide a complete picture of the culture of an organisation using this method”.

Moreover, per Schein, “it is never possible to describe an entire culture”, only “elements of culture”, which are described as “groups of practices that hang together in some way”.

Hopkins cites the work of both Turner and Vaughan as representative of research using major accident data. For Turner, studying major accidents helped him identify factors representing a culture of risk denial, but his studies were limited by relying only on major reports rather than on detailed inquiries.

Vaughan explored the Challenger space shuttle disaster, and her work was “quite explicitly a study of organisational culture and how it contributed to disaster”. Vaughan described the normalisation of deviance, a culture of production, and structural secrecy.

Hopkins then discusses two of his own studies that unpacked organisational cultures. One relates to the culture of rail organisations in Australia prior to the Glenbrook rail accident, in which Hopkins identified four main cultural themes:

1) The railways were excessively rule-focused “in ways that hindered safety”

2) The railway system was organisationally fragmented, where silos existed across the network

3) There was a “powerful culture of punctuality – on-time-running”

4) There was a risk-denying and risk-blind culture

Hopkins says these findings were similar to those of Turner and Vaughan: risk-denying cultures per Turner, and NASA’s culture as a “way of seeing that is simultaneously a way of not seeing” per Vaughan. These are all described as forms of risk blindness.

Hopkins remarks that cultural elements aren’t always directly evidenced in accident inquiries and must be inferred. In the rail example, nobody directly referred to ‘silos’; the idea was inferred from their descriptions of fragmentation and occupational isolation. Hence, a “culture of silos seemed a natural way to draw these ideas together”.

Next he asks how we can be confident about these inferences and how well they capture reality, especially given they were not explicitly identified by witnesses. He discusses different means, including a type of peer check, where people from those cultures comment on the credibility of the description. If they recognise the description as accurate, this lends it some credibility. However, this check also has its limitations.

Hopkins talks about how different models produce different perspectives. In Westrum’s model of organisational cultures, for instance, “the most critical issue for organisational safety is the flow of information”, and cultures are divided into pathological, bureaucratic and generative. Hudson’s expanded typology is also described, comprising pathological, calculative, bureaucratic, proactive and generative cultures.

NASA’s culture prior to the Challenger disaster was described as ‘bureaupathological’ by Vaughan.

Importantly, ethnographic and desktop ethnographic methods (such as analyses of inquiries) are “not well suited to producing evidence about the impact on safety of the cultural elements it identifies”.

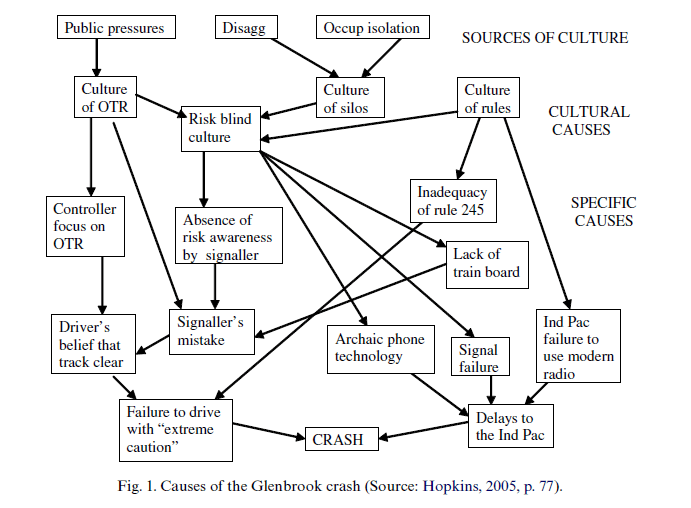

However, Hopkins believes that the latter approach, analysing major accident data, may overcome some of these limitations, as “The links between the cultural elements and the outcome can usually be demonstrated”. He draws on formal logic and counterfactual comparisons. For example, “If it can be concluded that in the absence of the cultural element concerned, the accident would not have occurred, or probably would not have occurred, then the cultural element can be counted as one of the causes of the accident”.

[** I think this is a bit optimistic and simplistic, but, whatever.]

He provides a diagram as an example, in which each arrow represents a necessary ‘but-for’ cause: if the element at the start of an arrow hadn’t existed, the outcome at its end wouldn’t have occurred.

The sources of organisational culture

It’s said by some that “the key to cultural change is leadership and that safety cultures or generative cultures can most easily be brought about by installing leaders who have the appropriate vision”.

He counters that inquiries into organisational culture “reveal that there are often less personal sources of culture which need to be understood and counteracted if there is to be any hope of changing the organisational culture itself”.

That is, organisational cultures may be shaped by, or rooted in, wider societal factors, public demand, constraints and so on. “Strong pressure” may therefore be required from external sources, like regulators or industry groups, to change the priority given to safety in decisions within organisations.

He discusses some of these challenges, such as launch decisions at NASA, and ground safety in the Australian Air Force, where air safety was underpinned by a “powerful technical engineering authority”, and the “only way ground safety would ever achieve the same status was if there was a similarly powerful ground safety authority within the Air Force”.

Curiously, Hopkins argues that while major accident research calls out the effects of organisational cultures on safety, “Neither the questionnaire technique nor the ethnographic method provide such ready insights” into how to improve these effects. [** I’d be interested in whether other people agree with this.]

Practical suggestions

Finally, Hopkins offers a few suggestions for understanding and influencing organisational cultures on safety.

One approach is from high reliability organisations / organising. He quotes Weick & Sutcliffe’s five characteristics: a preoccupation with failure; a reluctance to simplify; a sensitivity to operations; a commitment to resilience; and deference to expertise.

But studying all of these in the context of an organisation or an accident is time-consuming.

Another approach is to observe the way the organisation handles information about safety matters; from Westrum’s perspective, this is critical to safety. Turner and Pidgeon’s work also concurs on the role of information flow (and misflow) in accident incubation: “The heart of a safety culture (he says) is the way in which organisational intelligence and safety imagination regarding risk and danger are deployed”.

He also draws on Reason’s definition of a safety culture, which includes reporting and learning. Hence, “Where safety is an organisation’s top priority, the organisation will aim to assemble as much relevant information as possible, circulate it, analyse it, and apply it”.

As such, attention can focus on unpacking an organisation’s reporting practices “(who reports, what gets reported and what is done about these reports) and its strategies for learning from accidents, both its own and others”.

Ref: Hopkins, A. (2006). Studying organisational cultures and their effects on safety. Safety Science, 44(10), 875-889.

LinkedIn post: https://www.linkedin.com/pulse/studying-organisational-cultures-effects-safety-ben-hutchinson-5xvoc