Organisations frequently use incident data to report on current and historical performance and, to some extent, to evaluate risk exposure. I think it’s often seen as fairly representative of reality, although most people accept that not all events are reported.

But how closely is incident data coupled to reality, according to the published evidence? Some key findings include:

- 80% of accidents experienced by US mine workers went unreported (Probst & Graso, 2013).

- 71% of experienced accidents went unreported in manufacturing, utilities, health services and hospitality, a ratio of unreported to reported accidents of 2.43 : 1 (Probst & Estrada, 2010).

- Up to 68% of experienced accidents & injuries were not captured by OSHA & BLS surveillance systems (Rosenman et al., 2006).

- In US construction, >80% of reportable accidents & injuries were not reported to OSHA in companies with “poor” safety climates, but 47% of events still went unreported in companies with “good” safety climates (Probst & Brubaker, 2008).

- Some incident types were more likely to be reported than others: e.g. 73% of vehicle events and 28% of caught-between-objects events were reported, compared with 18-20% of slips, trips & falls and 9.4% of contact with HAZMAT. The authors suggest that visibility (and, of course, severity) may contribute to these reporting differences (Probst & Graso, 2013).
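To make the percentages above easier to compare, here is a minimal Python sketch that converts a share of unreported events `p` into an unreported : reported ratio, `p / (1 - p)`, and into a rough "actual events per reported event" multiplier, `1 / (1 - p)`. It assumes the share applies uniformly to every event, which the findings above suggest is not true (reporting varies a lot by incident type), so treat the multiplier as illustrative only. The function names are hypothetical.

```python
def unreported_ratio(share_unreported: float) -> float:
    """Convert a share of unreported events (0 to <1) into an unreported : reported ratio."""
    if not 0 <= share_unreported < 1:
        raise ValueError("share_unreported must be in [0, 1)")
    return share_unreported / (1 - share_unreported)


def reporting_multiplier(share_unreported: float) -> float:
    """Actual events per reported event, ASSUMING the share applies uniformly to all events."""
    if not 0 <= share_unreported < 1:
        raise ValueError("share_unreported must be in [0, 1)")
    return 1 / (1 - share_unreported)


# Shares taken from the findings listed above.
for label, share in [("US mine workers", 0.80), ("Mixed industries", 0.71)]:
    print(
        f"{label}: {unreported_ratio(share):.2f} : 1 unreported to reported, "
        f"~{reporting_multiplier(share):.1f} actual events per reported event"
    )
```

For the 71% figure this gives roughly 2.45 : 1; the published 2.43 : 1 presumably comes from the underlying counts, with 71% being a rounded value.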

But surely we can trust the more severe incidents to be representative? They get reported accurately, right? Recent work by Kevin Geddert, Sid Dekker and Drew Rae is of interest here: they found that injury severity and recordable classification were quite poorly coupled.

That is, only ~19% of injuries accepted for insurance compensation were classified as “recordable” in the incident management system. Further, only 17% of high-severity injuries and 33% of very-high-severity injuries were classed as recordable.

In their view, “the default assumption for any company should be that reportable injuries are not representative of the severity and nature of actual injuries” (Geddert, Dekker & Rae, 2021, p. 14).

I concur. I believe we have more than enough evidence to challenge the default confidence we place in our reporting systems.

Use your data for insights and to identify areas for deeper dives, sure, but as a proxy for risk exposure and reality? That trust seems misplaced. To quote Carl Sagan, “extraordinary claims require extraordinary evidence”, and I think we need extraordinary evidence before we trust our incident reporting data in that way.

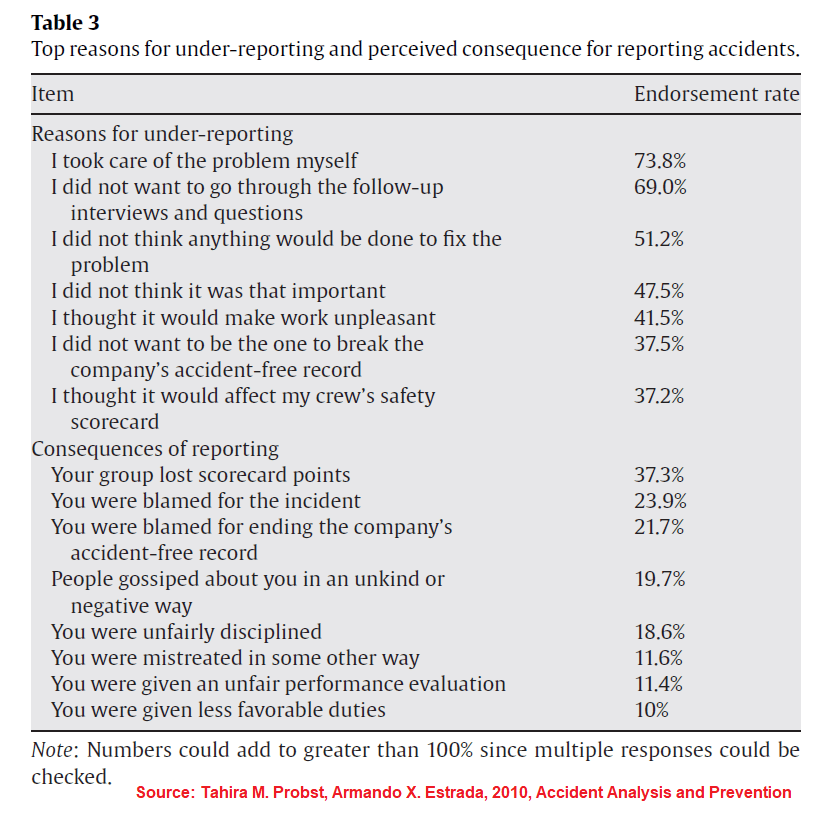

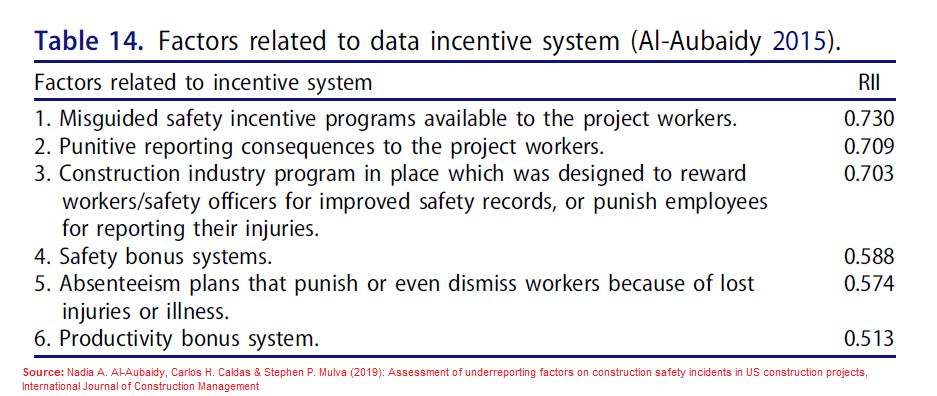

I’ve attached two images highlighting some common factors behind underreporting and its perceived connection to incentive systems.

1. Image 1 source: https://doi.org/10.1016/j.aap.2009.06.027

2. Image 2 source: https://doi.org/10.1080/15623599.2019.1613211

3. Geddert et al.: https://doi.org/10.3390/safety7030058

4. Summary of Geddert et al.: https://www.linkedin.com/pulse/how-does-selective-reporting-distort-understanding-ben-hutchinson

5. LinkedIn post: https://www.linkedin.com/posts/benhutchinson2_lies-damned-lies-and-incident-statistics-activity-6945863850126635008-kLgz?utm_source=share&utm_medium=member_desktop

Other summaries on underreporting:

6. https://www.linkedin.com/pulse/safety-culture-moral-disengagement-accident-ben-hutchinson

7. https://www.linkedin.com/pulse/voices-carry-effects-verbal-physical-aggression-ben-hutchinson

8. https://www.linkedin.com/pulse/accident-under-reporting-among-employees-testing-ben-hutchinson

9. https://safety177496371.wordpress.com/2021/02/20/assessment-of-underreporting-factors-on-construction-safety-incidents-in-us-construction-projects

10. https://www.linkedin.com/pulse/pressure-produce-reduce-accident-reporting-ben-hutchinson

First, Ben, many thanks for your reports: they are invaluable to me, and I love receiving them by email or seeing the posts on LinkedIn. They feed my brain and help me in my work with clients, so hopefully they help protect more workers at the end of the day.

Regarding incident reporting, I am wondering about a “country” bias in the data. I am European, and even worse, French, and as you know our social system is quite different from the US one. Most of the studies come from the US, where the incentives are quite different. At least for lost-time accidents, my feeling is that they are reported rather well, or even over-reported, in Europe (because the social system covers the individual once the accident is recognised), while other recordable accidents are clearly under-reported. Have you seen any European studies on the topic?

Yes, you raise a really good point. I’ve seen data that goes both ways. On the one hand, some data suggests that the incentives to hide or underreport incidents, and to work through pain, are lower in Western European countries; on the other hand, there’s data that contradicts this.

For instance, Probst (2013) compared US and Italian settings and found little difference in underreporting once job insecurity was accounted for. In both settings, underreporting rose as job insecurity increased.

However, on the balance of the research I’ve read, the incentives to underreport seem somewhat higher in the US than in Western European nations and Australia/UK (and this is strongly amplified among vulnerable groups such as Hispanic workers). I wouldn’t expect the difference to be *that* significant, though.
