This paper discusses some of the good, bad and ugly of GenAI use in construction. GenAI is “poised to fundamentally transform the Construction and Built Environment (CBE) industry” but is also a “dual-edged sword, offering immense benefits while simultaneously posing considerable difficulties and potential pitfalls”. Not a summary – just a few extracts: The Good: · GenAI… Continue reading How generative AI reshapes construction and built environment: The good, the bad, and the ugly

Tag: artificial-intelligence

Large language models powered system safety assessment: applying STPA and FRAM

An AI, STPA and FRAM walk into a bar…ok, that’s all I’ve got. This study used ChatGPT-4o and Gemini to apply STPA and FRAM to analyse: “liquid hydrogen (LH2) aircraft refuelling process, which is not a well-known process, that presents unique challenges in hazard identification”. One of several studies applying LLMs to safety… Continue reading Large language models powered system safety assessment: applying STPA and FRAM

Call Me A Jerk: Persuading AI to Comply with Objectionable Requests

Can LLMs be persuaded to act like d*cks? A really interesting study from Meincke et al. found human persuasion techniques also worked on LLMs. They tested how “classic persuasion principles like authority, commitment, and unity can dramatically increase an AI’s likelihood to comply with requests they are designed to refuse”. I’m drawing from their study… Continue reading Call Me A Jerk: Persuading AI to Comply with Objectionable Requests

Mind the Gaps: How AI Shortcomings and Human Concerns May Disrupt Team Cognition in Human-AI Teams (HATs)

This study explored the integration of AI into human teams (Human-AI Teams, HATs) and the hesitations people have about it. 30 professionals were interviewed. Not a summary, but some extracts: · “As AI takes on more complex roles in the workplace, it is increasingly expected to act as a teammate rather than just a tool” · HATs “must develop a shared… Continue reading Mind the Gaps: How AI Shortcomings and Human Concerns May Disrupt Team Cognition in Human-AI Teams (HATs)

How Much Content Do LLMs Generate That Induces Cognitive Bias in Users?

This study explored when and how Large Language Models (LLMs) expose the human user to biased content, and quantified the extent of biased information. E.g. they fed the LLMs prompts, asked them to summarise, and then compared how the LLMs changed the content or context, hallucinated, or changed the sentiment. Providing context: · LLMs “are… Continue reading How Much Content Do LLMs Generate That Induces Cognitive Bias in Users?

Cut the crap: a critical response to “ChatGPT is bullshit”

Here’s a critical response paper to yesterday’s “ChatGPT is bullshit” article from Hicks et al. Links to both articles below. Some core arguments: · Hicks et al. characterise LLMs as bullshitters, since LLMs “cannot themselves be concerned with truth,” and thus “everything they produce is bullshit” · Hicks et al. reject anthropomorphic terms such as hallucination or confabulation, since… Continue reading Cut the crap: a critical response to “ChatGPT is bullshit”

Using the hierarchy of intervention effectiveness to improve the quality of recommendations developed during critical patient safety incident reviews

This study evaluated the Hierarchy of Intervention Effectiveness (HIE) for improving patient safety incident recommendations. They were mainly interested in increasing the proportion of system-focused recommendations. Data came from over 16 months. Extracts: Ref: Lan, M. F., Weatherby, H., Chimonides, E., Chartier, L. B., & Pozzobon, L. D. (2025, June). Using the hierarchy of intervention… Continue reading Using the hierarchy of intervention effectiveness to improve the quality of recommendations developed during critical patient safety incident reviews

ChatGPT is bullshit

This paper challenges the label of AI hallucinations – arguing instead that these falsehoods better represent bullshit. That is, bullshit in the Frankfurtian sense (‘On Bullshit’, published in 2005): the models are “in an important way indifferent to the truth of their outputs”. This isn’t BS in the sense of junk data or analysis, but… Continue reading ChatGPT is bullshit

ChatGPT in complex adaptive healthcare systems: embrace with caution

This discussion paper explored the introduction of AI systems into healthcare. It covers A LOT of ground, so just a few extracts. Extracts: · “This article advocates an ‘embrace with caution’ stance, calling for reflexive governance, heightened ethical oversight, and a nuanced appreciation of systemic complexity to harness generative AI’s benefits while preserving the integrity of… Continue reading ChatGPT in complex adaptive healthcare systems: embrace with caution

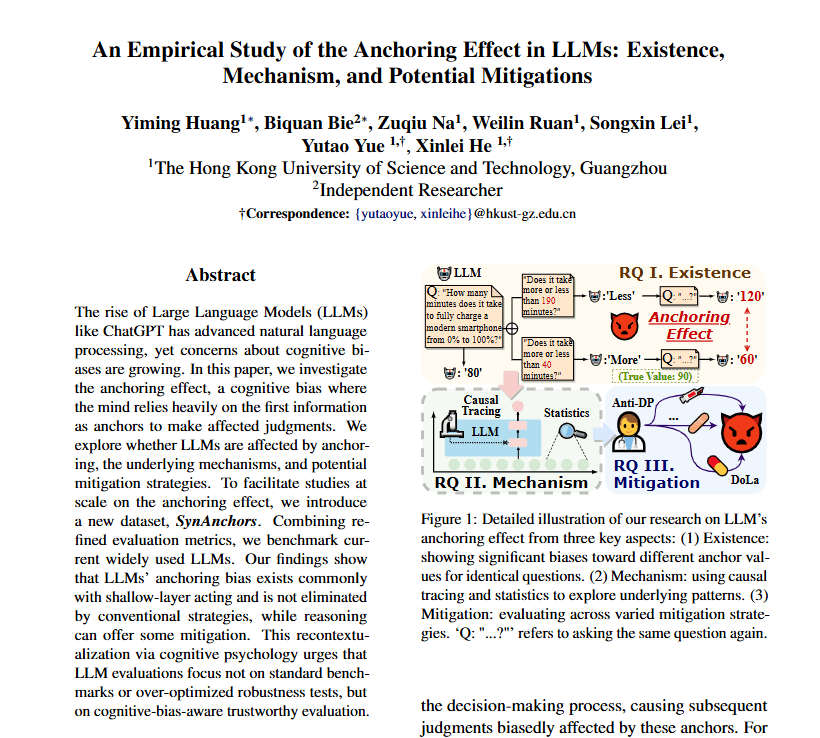

An Empirical Study of the Anchoring Effect in LLMs: Existence, Mechanism, and Potential Mitigations

This study found that several LLMs are fairly easily influenced by anchoring effects, consistent with human anchoring bias. Extracts: · “Although LLMs surpass humans in standard benchmarks, their psychological traits remain understudied despite their growing importance” · “The anchoring effect is a ubiquitous cognitive bias (Furnham and Boo, 2011) and influences decisions in many fields” · “Under uncertainty,… Continue reading An Empirical Study of the Anchoring Effect in LLMs: Existence, Mechanism, and Potential Mitigations