A couple more extracts from Hannigan et al.’s paper on ‘botshit’. Bullshit is “Human-generated content that has no regard for the truth, which a human then uses in communication and decision-making tasks”. Botshit is “Chatbot generated content that is not grounded in truth (i.e., hallucinations) and is then uncritically used by a human in communication… Continue reading Bullshit vs Botshit: what’s the difference?

Tag: llm

AI, bullshitting and botshit

“LLMs are great at mimicry and bad at facts, making them a beguiling and amoral technology for bullshitting” From a paper about ‘botshit’ – summary in a couple of weeks. Source: Hannigan, T. R., McCarthy, I. P., & Spicer, A. (2024). Beware of botshit: How to manage the epistemic risks of generative chatbots. Business Horizons, 67(5), 471-486.… Continue reading AI, bullshitting and botshit

Mind the Gaps: How AI Shortcomings and Human Concerns May Disrupt Team Cognition in Human-AI Teams (HATs)

This study explored the integration of AI into human teams (Human-AI Teams, HATs) and the hesitations around it. 30 professionals were interviewed. Not a summary, but some extracts: · “As AI takes on more complex roles in the workplace, it is increasingly expected to act as a teammate rather than just a tool” · HATs “must develop a shared… Continue reading Mind the Gaps: How AI Shortcomings and Human Concerns May Disrupt Team Cognition in Human-AI Teams (HATs)

How Much Content Do LLMs Generate That Induces Cognitive Bias in Users?

This study explored when and how Large Language Models (LLMs) expose the human user to biased content, and quantified the extent of biased information. E.g. they fed the LLMs prompts and asked them to summarise, then compared how the LLMs changed the content or context, hallucinated, or shifted the sentiment. Providing context: · LLMs “are… Continue reading How Much Content Do LLMs Generate That Induces Cognitive Bias in Users?

Cut the crap: a critical response to “ChatGPT is bullshit”

Here’s a critical response paper to yesterday’s “ChatGPT is bullshit” article from Hicks et al. Links to both articles below. Some core arguments: · Hicks et al. characterise LLMs as bullshitters, since LLMs “cannot themselves be concerned with truth,” and thus “everything they produce is bullshit” · Hicks et al. reject anthropomorphic terms such as hallucination or confabulation, since… Continue reading Cut the crap: a critical response to “ChatGPT is bullshit”

ChatGPT is bullshit

This paper challenges the label of AI hallucinations – arguing instead that these falsehoods are better described as bullshit. That is, bullshit in the Frankfurtian sense (‘On Bullshit’, published in 2005): the models are “in an important way indifferent to the truth of their outputs”. This isn’t BS in the sense of junk data or analysis, but… Continue reading ChatGPT is bullshit

ChatGPT in complex adaptive healthcare systems: embrace with caution

This discussion paper explored the introduction of AI systems into healthcare. It covers A LOT of ground, so just a few extracts. Extracts: · “This article advocates an ‘embrace with caution’ stance, calling for reflexive governance, heightened ethical oversight, and a nuanced appreciation of systemic complexity to harness generative AI’s benefits while preserving the integrity of… Continue reading ChatGPT in complex adaptive healthcare systems: embrace with caution

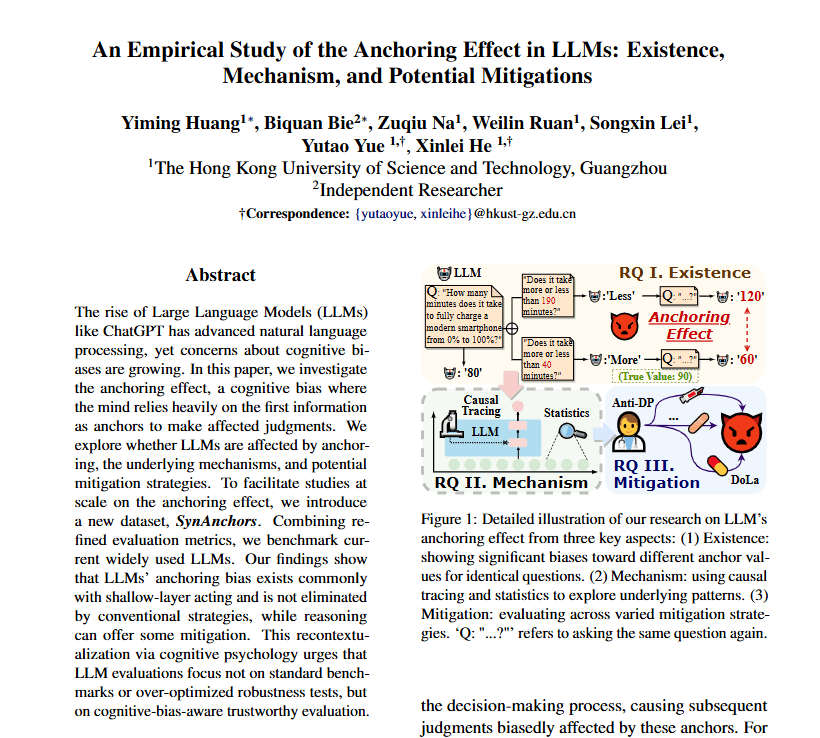

An Empirical Study of the Anchoring Effect in LLMs: Existence, Mechanism, and Potential Mitigations

This study found that several LLMs are fairly easily influenced by anchoring effects, consistent with human anchoring bias. Extracts: · “Although LLMs surpass humans in standard benchmarks, their psychological traits remain understudied despite their growing importance” · “The anchoring effect is a ubiquitous cognitive bias (Furnham and Boo, 2011) and influences decisions in many fields” · “Under uncertainty,… Continue reading An Empirical Study of the Anchoring Effect in LLMs: Existence, Mechanism, and Potential Mitigations

LLMs Are Not Reliable Human Proxies to Study Affordances in Data Visualizations

This was pretty interesting – it compared GPT-4o to people in extracting takeaways from visualised data. They were also interested in how well the LLM could simulate human respondents/responses. Note that the researchers are primarily interested in whether the GPT-4o model acts as a suitable proxy for human responses – they recognise there are other… Continue reading LLMs Are Not Reliable Human Proxies to Study Affordances in Data Visualizations

ChatGPT for analysing investigations

I think this is one of the better uses of LLMs regarding investigations – they trained their model to evaluate accident reports and extract key details from the reports. They found: · It could extract key information from unstructured data and “significantly reduce the manual effort involved in accident investigation report analysis and enhance the overall… Continue reading ChatGPT for analysing investigations